
Easy access to running containers on the local host network: Docker for Mac and Windows include a DNS server for containers and are integrated with the Mac OS X and Windows networking systems. On a Mac, Docker can be used even when connected to a very restrictive corporate VPN.

Train connection

For more on Docker networking, including an overview of multi-host networking, see the free ebook by Michael Hausenblas.

When you start working with Docker at scale, you suddenly need to know a lot about networking. As an introduction to networking with Docker, we're going to start small and show how quickly you need to start thinking about how to manage connections between containers.

A Docker container needs a host to run on. This can be either a physical machine (e.g., a bare-metal server in your on-premises datacenter) or a VM, either on-prem or in the cloud. The host runs the Docker daemon and client. On the one hand, these let you interact with a Docker registry (to pull/push Docker images). On the other hand, they let you start, stop, and inspect containers.

Simplified Docker architecture (single host)

The relationship between a host and containers is 1:N. This means that one host typically has several containers running on it. For example, one report puts the average at some 10 to 40 containers per host, depending on how beefy the machine is. And here's another data point: at Mesosphere, various load tests on bare metal showed that no more than around 250 containers per host were possible.

Whether you have a single-host deployment or use a cluster of machines, you will almost always have to deal with networking. For most single-host deployments, the question boils down to data exchange via a shared volume versus data exchange through networking (HTTP-based or otherwise).

Although a Docker data volume is simple to use, it also introduces tight coupling, which makes it harder to turn a single-host deployment into a multihost deployment. Naturally, the upside of shared volumes is speed. In multihost deployments, you need to consider two aspects: how containers communicate within a host, and what the communication paths between different hosts look like.

Both performance considerations and security aspects will likely influence your design decisions. Multihost deployments usually become necessary in one of two situations. The first is when the capacity of a single host is insufficient (see the earlier discussion on the average and maximum number of containers on a host). The second is when you want to employ distributed systems such as Apache Spark, HDFS, or Cassandra.

Distributed Systems and Data Locality

The basic idea behind using a distributed system (for computation or storage) is to benefit from parallel processing, usually together with data locality. By data locality, I mean the principle of shipping the code to where the data is, rather than the (traditional) other way around. Think about the following for a moment: your dataset may be in the terabyte range while your code is in the megabyte range. In that case, it's more efficient to move the code across the cluster than to transfer terabytes of data to a central processing location.
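The data-locality argument is easy to check with back-of-the-envelope arithmetic. Below is a minimal Python sketch. The 1 TB dataset, 50 MB artifact, 100 nodes, and 10 Gbit/s link are illustrative assumptions. The model also ignores protocol overhead and contention, and it ignores the fact that code could be sent to nodes in parallel.

```python
def transfer_seconds(size_bytes: float, link_gbps: float) -> float:
    """Idealized transfer time: size / bandwidth, ignoring protocol overhead and contention."""
    return (size_bytes * 8) / (link_gbps * 1e9)

TB = 1e12  # decimal units
MB = 1e6

data_size = 1 * TB   # hypothetical dataset
code_size = 50 * MB  # hypothetical job artifact (e.g., a JAR)
nodes = 100          # hypothetical cluster size
link_gbps = 10.0     # assumed link speed

move_data = transfer_seconds(data_size, link_gbps)          # all data to one place
ship_code = nodes * transfer_seconds(code_size, link_gbps)  # one copy per node, sent one after another

print(f"move {data_size / TB:.0f} TB of data: {move_data:.0f} s")
print(f"ship code to {nodes} nodes: {ship_code:.1f} s")
```

Under these assumptions, the code could even be shipped to every node one after another. That still costs about 0.5% of the time needed to move the data once, which is the intuition behind data locality.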

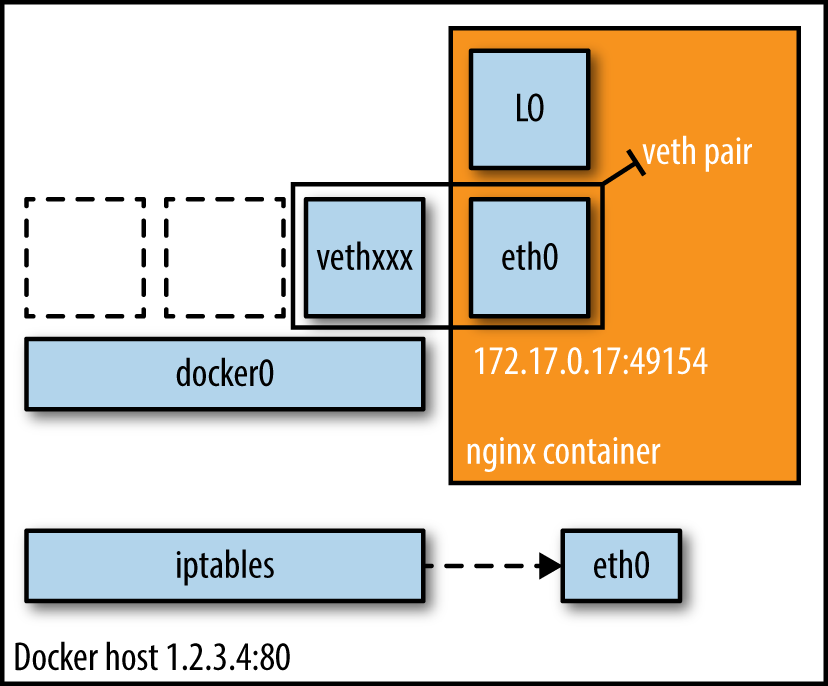

In addition to being able to process things in parallel, you usually gain fault tolerance with distributed systems, as parts of the system can continue to work more or less independently.

Simply put, Docker networking is the native container SDN solution you have at your disposal when working with Docker. In a nutshell, there are four modes available for Docker networking: bridge mode, host mode, container mode, and no networking. We will take a closer look at each of these modes as they apply to a single-host setup. We will conclude at the end of this article with some general topics such as security.

Bridge Mode Networking

In this mode, the Docker daemon creates docker0, a virtual Ethernet bridge that automatically forwards packets between any other network interfaces that are attached to it.

By default, the daemon then connects all containers on a host to this internal network by creating a pair of peer interfaces. One peer becomes the container's eth0 interface, and the other is placed in the namespace of the host. The daemon also assigns an IP address/subnet from the private IP range to the bridge.

Note: Because bridge mode is the Docker default, you could equally have used docker run -d -P nginx:1.9.1 instead of specifying --net=bridge explicitly. You can publish all exposed ports of the container with -P, or a specific port with -p hostport:containerport. If you use neither, the IP packets will not be routable to the container from outside the host.
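To make the bridge addressing concrete, here is a small Python sketch using the standard ipaddress module. It assumes the commonly seen default docker0 subnet 172.17.0.0/16; the daemon may pick or be configured with another range. It checks the container address 172.17.0.3 from the container-mode transcript in this article. It also checks 172.18.0.1, which belongs to a separate, compose-created network.

```python
import ipaddress

# Assumption: the default docker0 subnet; Docker may use a different range.
BRIDGE_SUBNET = ipaddress.ip_network("172.17.0.0/16")

def bridge_gateway(subnet: ipaddress.IPv4Network) -> ipaddress.IPv4Address:
    """The bridge conventionally takes the first usable host address of its subnet."""
    return next(subnet.hosts())

def on_bridge(addr: str, subnet: ipaddress.IPv4Network = BRIDGE_SUBNET) -> bool:
    """True if a container address falls inside the bridge subnet."""
    return ipaddress.ip_address(addr) in subnet

gateway = bridge_gateway(BRIDGE_SUBNET)
usable = BRIDGE_SUBNET.num_addresses - 2  # minus network and broadcast addresses

print(gateway)                  # 172.17.0.1
print(on_bridge("172.17.0.3"))  # True
print(on_bridge("172.18.0.1"))  # False: a different (e.g., compose-created) network
print(usable)                   # 65534
```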

Bridge mode networking setup

Host Mode Networking

This mode effectively disables network isolation of a Docker container. Because the container shares the networking namespace of the host, it is directly exposed to the public network. Consequently, you need to coordinate port usage across containers yourself, because port mappings (-p/-P) do not apply in this mode.

Container Mode Networking

In this mode, you tell Docker to reuse the networking namespace of another container via --net=container:<name or ID>, as in the following transcript.

Docker container mode networking in action

    $ docker run -d -P --net=bridge nginx:1.9.1
    $ docker ps
    CONTAINER ID   IMAGE         COMMAND    CREATED         STATUS         PORTS                                            NAMES
    eb19088be8a0   nginx:1.9.1   nginx -g   3 minutes ago   Up 3 minutes   0.0.0.0:32769->80/tcp, 0.0.0.0:32768->443/tcp   admiring_engelbart
    $ docker exec -it admiring_engelbart ip addr
    8: eth0@if9: mtu 9001 qdisc noqueue state UP group default
        link/ether 02:42:ac:11:00:03 brd ff:ff:ff:ff:ff:ff
        inet 172.17.0.3/16 scope global eth0
    $ docker run -it --net=container:admiring_engelbart ubuntu:14.04 ip addr
    8: eth0@if9: mtu 9001 qdisc noqueue state UP group default
        link/ether 02:42:ac:11:00:03 brd ff:ff:ff:ff:ff:ff
        inet 172.17.0.3/16 scope global eth0

The result is what we would have expected: the second container, started with --net=container, has the same IP address as the first container (with the glorious auto-assigned name admiring_engelbart), namely 172.17.0.3.

No Networking

This mode puts the container inside its own network stack but doesn't configure it.
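The PORTS column in the transcript above shows what -P did: each exposed container port was published on a random high host port. As an illustration, here is a small Python parser for that column. It assumes the IPv4 host_ip:host_port->container_port/proto layout shown above and is not a Docker API. For scripting against a live daemon, the CLI's own docker port <container> command lists the mappings directly.

```python
import re
from typing import NamedTuple

class PortMapping(NamedTuple):
    host_ip: str
    host_port: int
    container_port: int
    proto: str

# Matches entries like "0.0.0.0:32769->80/tcp" as printed in the PORTS column (IPv4 only).
_MAPPING = re.compile(r"(?P<ip>[\d.]+):(?P<hport>\d+)->(?P<cport>\d+)/(?P<proto>\w+)")

def parse_ports(column: str) -> list[PortMapping]:
    """Parse published port mappings from a `docker ps` PORTS cell.

    Entries without a host side (exposed but unpublished ports, e.g. "80/tcp") are skipped.
    """
    mappings = []
    for entry in column.split(","):
        m = _MAPPING.fullmatch(entry.strip())
        if m:
            mappings.append(PortMapping(m["ip"], int(m["hport"]), int(m["cport"]), m["proto"]))
    return mappings

ports = parse_ports("0.0.0.0:32769->80/tcp, 0.0.0.0:32768->443/tcp")
for p in ports:
    print(f"host {p.host_ip}:{p.host_port} -> container {p.container_port}/{p.proto}")
```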

Effectively, this turns off networking. It is useful in two cases: for containers that don't need a network (such as batch jobs writing to a disk volume), or when you want to set up your own custom networking.

Docker no-networking in action

    $ docker run -d -P --net=none nginx:1.9.1
    $ docker ps
    CONTAINER ID   IMAGE         COMMAND    CREATED         STATUS         PORTS   NAMES
    d8c26d68037c   nginx:1.9.1   nginx -g   2 minutes ago   Up 2 minutes           grave_perlman
    $ docker inspect d8c26d68037c | grep IPAddress
        "IPAddress": "",
        "SecondaryIPAddresses": null,

As you can see, there is no network configured, precisely as we would have hoped. You can read more about networking and learn about configuration options in the excellent Docker documentation.
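Grepping docker inspect output works interactively. A script can instead ask for the field directly with docker inspect --format '{{.NetworkSettings.IPAddress}}' d8c26d68037c, or parse the JSON. Below is a minimal Python sketch of the JSON route. The SAMPLE string is a hypothetical, heavily trimmed stand-in for real docker inspect output, where Id is the full 64-character ID.

```python
import json

def container_ips(inspect_output: str) -> dict[str, str]:
    """Map container ID to its IPAddress from `docker inspect` JSON output.

    Reads the top-level NetworkSettings.IPAddress field; an empty string
    means no address was assigned (as with --net=none).
    """
    result = {}
    for container in json.loads(inspect_output):
        settings = container.get("NetworkSettings") or {}
        result[container["Id"]] = settings.get("IPAddress", "")
    return result

# Hypothetical, trimmed sample shaped like `docker inspect` output.
SAMPLE = '''
[
  {
    "Id": "d8c26d68037c",
    "NetworkSettings": {"IPAddress": "", "SecondaryIPAddresses": null}
  }
]
'''

ips = container_ips(SAMPLE)
unnetworked = [cid for cid, ip in ips.items() if not ip]
print(unnetworked)  # ['d8c26d68037c']
```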

Michael is a Developer Advocate at Mesosphere, where he helps appops build and operate distributed applications. His background is in large-scale data integration, Hadoop, NoSQL datastores, IoT, and Web applications, and he's experienced in advocacy and standardization at the W3C and IETF. Michael contributes to open source software (Kubernetes, Apache Mesos, Apache Myriad, etc.). He shares his experience with distributed operating systems and large-scale data processing through blog posts and public speaking engagements.

Hi, I've been trying, to no avail, to make the following development scenario work with docker-compose.

Environment:
- OS: OS X 10.11.4
- Docker versions (using Docker for Mac beta):
  - Engine: 1.11.1, build 5604cbe
  - Compose:
    - docker-compose version 1.7.1, build 0a9ab35
    - docker-py version: 1.8.1
    - CPython version: 2.7.9
    - OpenSSL version: OpenSSL 1.0.1j 15 Oct 2014

Services:
- app: web application, running natively on the host.
  - Depends on the database (db).
  - Impractical to run in a container; building/serving app takes 10x longer than running natively on the host.
- worker: headless application, running in a container.
  - Depends on the database (db).
  - Depends on being able to hit the web app's (app) REST API.
- db: database, running in a container.

Since app isn't a container, I need to expose db's port to the host machine so I can access the db container from it. Here's what a simplified version of my docker-compose.yml file looks like:

    version: '2'
    services:
      worker:
        build: .
        links:
          - db
      db:
        image: mongo
        ports:
          - '27017:27017'

docker-compose up works properly. I then run my app separately, with its DB connection string pointing to localhost:27017.

App and worker can access db, no problem. The issue appears when worker wants to hit app's API: I can't reach the host machine by default. Still, my DB logs do show that it is receiving connections from 172.18.0.1. So I thought that should be the IP of my host machine on the default network created by compose. When attaching to the container and trying to curl it, though, I got no answer. Also, when checking ifconfig, I didn't even see the docker0 interface, just eth0 and lo.

Since I'm new to the networking layer of compose, I went through all the docs I could find and tried several things. My networks are:

    $ docker network ls
    NETWORK ID     NAME                DRIVER
    2772b8a002ec   bridge              bridge
    3243cfc5b4fb   myproject_default   bridge
    07c1adccfe55   host                host
    91e27cd6d9f1   none                null

So I thought maybe using the default bridge network instead of the one created by docker-compose might shed some light. I added network_mode: bridge to both services in docker-compose.yml:

    # added network_mode: bridge
    version: '2'
    services:
      worker:
        build: .
        links:
          - db
        network_mode: bridge
      db:
        image: mongo
        ports:
          - '27017:27017'
        network_mode: bridge

This produced only two changes: the docker0 interface became visible from within the containers, and the IP reported by the database was now 172.17.0.1 instead of 172.18.0.1. There was still no answer from the gateway IP on that network.
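The 172.18.0.1 and 172.17.0.1 addresses seen in the database logs are the gateways of the compose network and the default bridge, respectively. On Linux, a container can find its default gateway by reading /proc/net/route. Below is a minimal Python sketch of that parsing, run against a hypothetical sample table. One caveat: with Docker for Mac, containers run inside a lightweight Linux VM, so this gateway address belongs to that VM rather than to macOS itself. That is consistent with the curl attempts above getting no answer from the natively running app.

```python
import socket
import struct
from typing import Optional

def default_gateway(route_table: str) -> Optional[str]:
    """Return the default gateway from the text of Linux's /proc/net/route.

    Destination and Gateway are hex-encoded IPv4 addresses in
    little-endian byte order; Destination 00000000 marks the default route.
    """
    for line in route_table.strip().splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) >= 3 and fields[1] == "00000000":
            return socket.inet_ntoa(struct.pack("<L", int(fields[2], 16)))
    return None

# Hypothetical sample; inside a container you would read open("/proc/net/route").read().
SAMPLE_ROUTE = (
    "Iface\tDestination\tGateway \tFlags\tRefCnt\tUse\tMetric\tMask\t\tMTU\tWindow\tIRTT\n"
    "eth0\t00000000\t010012AC\t0003\t0\t0\t0\t00000000\t0\t0\t0\n"
    "eth0\t000012AC\t00000000\t0001\t0\t0\t0\t0000FFFF\t0\t0\t0\n"
)

print(default_gateway(SAMPLE_ROUTE))  # 172.18.0.1
```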

Finally, I tried making all services hook directly into the host network with network_mode: host. I also had to specify the connection string for the DB manually, since container linking isn't allowed with this network mode.

    # set network_mode to host
    version: '2'
    services:
      worker:
        build: .
        depends_on:
          - db
        environment:
          - MONGO_URL=mongodb://localhost:27017/test
        network_mode: host
      db:
        image: mongo
        ports:
          - '27017:27017'
        network_mode: host

And here's the weird part: app is now unable to reach the db container. docker ps shows that no port is published for the db container, even though ports is specified there:

    $ docker ps -a
    CONTAINER ID   IMAGE          COMMAND         CREATED          STATUS          PORTS   NAMES
    xxxxxxxxxxxx   XXXXXXXXXXXX   "/bin/sh -c "   xx seconds ago   Up xx seconds           myproject_worker_1
    yyyyyyyyyyyy   YYYYYYYYYYYY   "/bin/sh -c "   xx seconds ago   Up xx seconds           myproject_db_1

And here's where I don't know how to move forward. Is this the expected behavior? My workaround is to manually pass my host's local IP to the worker through environment and use that IP from inside the app.

But that breaks whenever I switch networks or try to run this scenario on a different machine. Is there any other "proper" way around this? Thanks in advance.
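The empty PORTS column in the host-mode attempt is expected. When a container uses the host's network stack, Docker does not publish ports for it, so mongo simply listens on 27017 in whatever namespace counts as "host". On Docker for Mac, that namespace is the Linux VM's network rather than macOS. This would explain why app, running natively, could no longer connect. Below is a minimal Python sketch of a lint check for this case. It works on the compose services already loaded into dicts; loading the YAML, e.g. with PyYAML, is left out. The warning text is hypothetical wording, not a Compose message.

```python
def host_mode_warnings(services: dict[str, dict]) -> list[str]:
    """Flag compose services that list ports while using network_mode: host.

    With host networking the container shares the host's network stack,
    so no port publishing is set up for it.
    """
    warnings = []
    for name, svc in services.items():
        if svc.get("network_mode") == "host" and svc.get("ports"):
            warnings.append(f"{name}: 'ports' has no effect with network_mode: host")
    return warnings

# The services from the host-mode attempt above, as plain dicts (build/environment omitted).
services = {
    "worker": {"depends_on": ["db"], "network_mode": "host"},
    "db": {"image": "mongo", "ports": ["27017:27017"], "network_mode": "host"},
}

for w in host_mode_warnings(services):
    print(w)  # db: 'ports' has no effect with network_mode: host
```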

Thanks; I actually did come across that (very helpful!) set of articles while trying to work around this. I hit a bit of a hiccup at the iptables section, though, since I would like my solution to be host-OS-agnostic (I need to cater to developers running Linux, Mac, and Windows). I will check out the forums and cross-post there, but I was hoping it was me doing something wrong rather than a hard limit. That being said, are there any good practices or concerns when using privileged: true?

I didn't find much about that in the docs or elsewhere; the article does mention it, though it says using it isn't a good idea. Thanks again!

The only way I could work around this is by manually injecting the host's IP into the containers and referencing it from them that way. I've since been able to successfully containerise the app, so I can just use the alias hostname and it works. I still find it weird that this works the way it does.

I'm not sure if it's of any use discussing this further, since I know the complete docker network stack is supposed to be revamped in the near future. You may close this if you like; thanks for your help!